Cipher Strength and Key Length

What Does It Mean If A Cipher Is "Strong"?

We want to protect the confidentiality of some data, which means we need to encrypt it. But which cipher should we choose? This page explains how to select a cipher that will be strong enough. Security relies on more than simply selecting the cipher, we also must be careful of how we use it and how we generate and manage the keys.

A cipher algorithm is strong if:

- Given some pairs of plaintext and corresponding ciphertext, an attacker can only guess at the key used, even if they have a copy of the algorithm. Knowledge of a strong algorithm provides no advantage to the attacker.

- You can't search for "close" keys. Popular movies and TV shows to the contrary, decryption of real algorithms is all-or-nothing. No partial messages start to appear. If your key is off by just one bit, the output appears to be essentially random.

- A slight change in the plaintext or in the key causes a drastic change in the ciphertext. That is termed confusion. The drastic change is not localized but is spread throughout the resulting ciphertext. That is termed diffusion.

Note that each of those features implies the others. For strong asymmetric cryptography, an additional feature is:

- Given a copy of the algorithm and one of the keys in the pair, an attacker can only guess at the other key.

Inventing New Cryptosystems and Calling Them "Strong"

What if someone says that they just invented a new and very secure cipher algorithm?

That means that they must be either a mathematical genius or a fool. Which is the far more likely explanation?

What if someone says that a cipher algorithm must be kept secret for security?

We have known that "security through obscurity" is ineffective for well over a century. Auguste Kerckhoffs (1835-1903) mathematically proved that the security of a cryptosystem must not depend on keeping its algorithm secret, as discussed on a previous page. See his article "La cryptographie militaire", from Journal des sciences militaries, vol IX, pp 5-38, Jan 1883.

19th century computing pioneer Charles Babbage wrote the following in his autobiography, Passages from the Life of a Philosopher:

One of the most singular characteristics of the art

of deciphering is the strong conviction possessed by every

person, even moderately acquainted with it, that he is able

to construct a cipher which nobody else can decipher.

I have also observed that the cleverer the person,

the more intimate is his conviction.

In my earliest study of the subject, I shared in this

belief, and maintained it for many years.

In a conversation on that subject which I had with the

late Mr. Davies Gilbert, President of the Royal Society,

each maintained the he possessed a cipher which was

absolutely inscrutable.

On comparison, it appeared that we had both imagined

the same law.

Both men had re-invented the flawed autokey system of Cardano and Vigenère.

What "Flawed" or "Weak" or "Attacked" Might Mean

When cryptanalysts say than a cipher or hash algorithm is flawed or weak, or that it can be successfully attacked, they do not necessarily mean that it can be trivially broken. They mean that it was not quite as strong as we thought it was. The attack very likely remained theoretical rather than practical.

But keep in mind that a defined algorithm stays exactly the same for all time, while attacks only get better. The mathematical understanding and computer speed used in attacks will improve over time. A theoretical weakness now may turn into something very serious in the future.

Strong and Weak Keys

The key must be hard to guess — for example,

something better than the binary representation of the

string secret — and so they need to look

fairly random.

English text has little randomness. The entropy or unpredictability of typical English text is between 1.0 and 1.5 bits per character, as low as 0.6 to 1.3 bits per character for some samples. [Shannon, Claude E.: "Prediction and entropy of printed English", The Bell System Technical Journal, 30:50-64, January 1951]

What's more, some algorithms have some specific key patterns

that are called weak.

These weak keys inadequately obscure the cleartext information,

the ciphertext leaks information about the cleartext or

about the key itself.

These weak keys are typically those with large blocks of

all zeros or all ones, or large blocks of alternating

010101010101...

or

001100110011...

or similar.

Keys should appear to be highly random binary patterns.

For example, a randomly generated 64-bit key

in hexadecimal (base 16):

79baed51c147dc5d

And the binary equivalent, broken into 8-bit blocks:

01111001 10111010 11101101 01010001 11000001 01000111 11011100 01011101

Functions like bcrypt, Argon2, PBKDF2, and scrypt can be used for key stretching, making a human-selected passphrase much resistant to attacks.

Why is Triple-DES only 112 bits, when DES is 56 bits?

Oh, it's even worse than that.

But first, realize that worrying about 3DES is like rearranging

deck chairs on a sinking coal-fired steamship calling

CQD

or SOS

on a spark-gap transmitter.

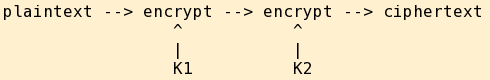

Encrypting twice in a row, using the same algorithm and two keys, does produce ciphertext:

However, if the encryption algorithm is what is called a cryptographic group, then there exists some third key K3 where a single encryption with K3 alone produces the same ciphertext. Consider this from the attacker's point of view — all you need to decrypt is K3, so double encryption as above uses twice the work and provides no more security than one encryption step!

Even if the cipher is not a cryptographic group (and DES is not), there is something called a meet in the middle attack that makes the sequence of encryption operations no more secure against a brute-force attack than just encrypting it once.

OK, so chaining multiple encryption steps is not useful...

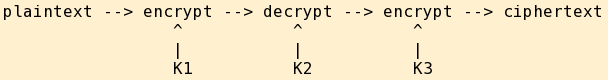

Triple-DES works by encrypting with one key, then decrypting with a second key, and then encrypting with a third key:

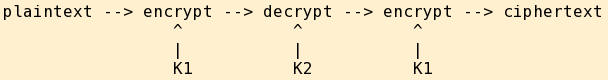

Yes, for DES those are three 56-bit keys and 3×56 = 168 bits. However, due to that pesky meet in the middle attack mentioned above, the complexity (meaning the computational work required) for an attacker is no greater than it would be for encrypting with one key, decrypting with the second, and then encrypting again with the first key:

Since you get no better security against determined cryptanalysis, the effective strength is no better than that of a 112-bit symmetric key no matter if you use (K1,K2,K3) or just (K1,K2,K1) as above. [see ref 1 below]

Now, in 1990 a known-plaintext attack on two-key 3DES was found, and US NIST rates two-key (K1,K2,K1) 3DES at 80 bits equivalent strength, and three-key (K1,K2,K3) 3DES at 112 bits equivalent strength. [see ref 2 below, and note the vast amounts of plaintext-ciphertext data required!] I find it very interesting that the NSA's formerly classified Skipjack cipher has an 80 bit key. The coincidence of 80 bit key length is a bit striking....

Also see the well-written Wikipedia article on Triple DES, which points to further papers.

References:

[1] R. C. Merkle and M. Hellman,

"On the Security of Multiple Encryption",

Communications of the ACM,

vol 24, no 7, 1981, pp 465-467.

This is summarized as

"Triple encryption, with three independent keys, is as secure

as one might naively expect double encryption to be"

in Bruce Schneier,

Applied Cryptography,

John Wiley, 1996,

ISBN 0-471-12845-7,

sections 11.3 and 15.1-15.3.

[2] "A known-plaintext attack on two-key triple encryption",

van Oorschot, et al., EuroCrypt '90,

and

NIST Special Publication 800-57,

"Recommendation for Key Management",

Part 1,

Part 2,

and

Part 3.

OK, So How Long Are The Keys?

The algorithms work differently, so you cannot simply compare key lengths to decide which is more secure against a brute-force search.

Very generally speaking, to get the same strength as an N-key symmetric cipher, an elliptic curve algorithm or a cryptographic hash needs to have a key 2N times as long, and an asymmetric cipher needs to have a key roughly 10N times as long or longer. Sorry, that's just the way the math goes. This is why asymmetric encryption is nice for key management, but symmetric algorithms are then used for bulk data encryption. [See section 1.8.4 of Handbook of Applied Cryptography, Menezes, van Oorschot, and Vanstone, 1997, available as a free PDF download]

One analysis of this is found in Applied Cryptography, Bruce Schneier, ISBN 0-471-12845-7. Thanks to Naftali Fasten for a really nice summary of parts of its chapter 7. A table based on that is:

| Symmetric Encryption | 56 | 64 | 80 | 112 | 128 | 256 | 448 | 1024 |

| Cryptographic Hash | 112 | 128 | 160 | 224 | 256 | 512 | 896 | 2048 |

| RSA Asymmetric Encryption | 384 | 494 | 768 | 1792 | 2304 | 10753 | 39031 | 234205 |

And, from NIST and NSA:

| Symmetric encryption | 80 | 112 | 128 | 192 | 256 |

| Elliptic Curve asymmetric encryption | 160 | 224 | 256 | 384 | 512 |

| RSA asymmetric encryption | 1024 | 2048 | 3072 | 7680 | 15380 |

Also see

RFC 5480

and the manual page for the

ssh-keygen

program.

And now, some specific examples of cipher algorithms and their key lengths:

| Algorithm | Key Length | Notes |

| DES | 56 | No longer considered secure enough against brute-force attacks, because 256 = 72,057,594,037,927,936 is not a big enough search space given today's hardware. See the EFF project to build dedicated DES-cracking hardware. |

| FEAL | 64 | |

| LOKI | 64 | LOKI89 is insecure, LOKI91 is better |

| Skipjack | 80 | Widely speculated to have an NSA back door when it was to have been in the Clipper chip that would have been widely installed in hardware. |

| 3DES | 112, 168 | |

| IDEA | 128 | Patented, requires license |

| REDOC II | 160 | Patented, requires license |

| CAST-128 or CAST5 | 40–128 | Available royalty-free worldwide for commercial and non-commercial applications, despite earlier CAST work being patented. |

| CAST-256 or CAST6 | 128–256 | Available royalty-free worldwide for commercial and non-commercial applications, despite earlier CAST work being patented. |

| AES | 256 | Advanced Encryption Standard, replaces DES. Algorithm was originally known as "Rijndahl" |

| GOST | 256 | Государственный Станарт Союза ССР, Gosudarstvennyy Standart Soyuza SSR, Russian for "Government Standard of the Union of Soviet Socialist Republics", which dates it |

| Kalyna | 256 | Калина was adopted as the national encryption standard of Ukraine in 2015. See the authors' paper at eprint.iacr.org and the other submissions to the Ukrainian national public cryptographic competition. |

| RC6 | 128–256 | |

| Twofish | 128–256 | See: Twofish |

| Blowfish | 32–448 | See: Blowfish |

| Khufu | 512 | |

| Khafre | 512 | |

| RC2 | 8–1024 | Patented, proprietary algorithm, requires license |

| RC4 | 40–2048 | A stream cipher, unlike the block ciphers otherwise making up this list. (see other stream ciphers here) Optionally usable in TLS/SSL, but recommended against as weaknesses are well known as described here. |

| RC5 | 128–2040 | Variable length key, up to 2040 bits |

| REDOC III | up to 20,480 | Patented, requires license |

Modes Of

Operation

Block ciphers must be used in some specific mode — Electronic Codebook or ECB (usually very insecure), Cipher Block Chaining or CBC, Cipher Feedback or CFB, Output Feedback or OFB, Counter or CTR, Galois/Counter Mode or GCM, and more.

The Wikipedia page has a basic overview. For the full details see "Evaluation of Some Blockcipher Modes of Operation" by Phillip Rogaway, University of California, Davis. It's a 159–page evaluation carried out for the Cryptography Research and Evaluation Committees for the Government of Japan.

| Algorithm | Key Length | Notes |

| DSA | 1024 | U.S. government standard for a component of digital signatures |

| ECDSA | 1024 | U.S. government standard for a component of digital signatures |

| El Gamal | 1024–4096 | Used in GNU Privacy Guard. Key size is variable, 1024–4096 bit keys are used in GnuPG. |

| RSA | typically 1024–4096 | At least 2048 bits are recommended today. Your computer should be fast enough to comfortably handle that key size or larger, while your attacker's computer might be getting close to being able to discover 1024-bit keys. |

A number of Elliptic Curve Cryptography algorithms are available. For a while it seemed that ECC provided a backup in case a general-purpose quantum computer was finally developed. But in 2015 the NSA announced that post-quantum cryptography would need some other basis. A Post-Quantum Crypto conference series was already running. Key sizes will be large, at least several thousand bits and ranging up to several million bits for some algorithms, and much study remains to investigate their theoretical security.