Syslog Over TLS

Collect Logs Safely With Rsyslog and GnuTLS

Log collection on Linux and other operating systems

can become complex.

However, we need to do it carefully,

as the information collected in logs helps us to

detect and analyze security issues,

and to troubleshoot a wide variety of problems

with network misconfiguration, failing hardware, and so on.

You can even get into trouble for not doing it correctly.

You may have legal or regulatory requirements

to collect and protect logs

in demonstrably safe and reliable ways.

On this page I explain what I had to do to satisfy the

STIG

or Security Technical Implementation Guide,

a U.S. Department of Defense requirement.

The STIG goes so far as to specify a required component

of the solution, and some specific configuration details.

Unfortunately, it cites some outdated syntax.

I'll show you how it should be done.

Many of the compliance requirements for protecting

sensitive personal information in various fields

say that log collection and protection is required,

although they don't specify exactly how.

PCI-DSS is an international industry-wide requirement

for payment card (credit, debit, etc) information.

In the U.S.,

HIPAA is about medical and related information,

GLBA applies to financial institutions

(banks and credit unions),

and so on.

The compliance requirements generally have verbiage about

how log information is important

so it must be carefully collected and protected.

They won't say exactly how.

"Industry best practices"

will be the usual non-explanation.

Let's see how to meet the U.S. DoD requirement,

which should also satisfy reasonable alternatives.

What Are These Logs?

Individual subsystems can maintain their own logs. For example, a web server process certainly will. However, the operating system environment can also provide general-purpose log collection.

A busy server providing a variety of network services

will collect a lot of log data!

Since the email service,

the print service,

the web service,

and all the other network daemons

came from separate projects,

their native logs will vary widely.

All the logs will generally contain plain text,

one line per event,

so you can easily analyze them with well-known tools like

grep,

sed,

awk,

sort,

and so on.

However, the syntax and semantics of those one-line reports

will vary from one service to another.
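Here's a minimal sketch of that kind of one-line-per-event analysis. The sample lines are made up and assume the Common Log Format that many web servers use, so the field numbers would differ for other services.

```shell
#!/bin/sh
# Hypothetical sample lines in Common Log Format; real logs may differ.
sample_log() {
cat <<'EOF'
10.0.2.20 - - [01/Jan/2025:10:00:01 +0000] "GET /index.html HTTP/1.1" 200 5120
10.0.2.21 - - [01/Jan/2025:10:00:05 +0000] "GET /missing.html HTTP/1.1" 404 196
10.0.2.20 - - [01/Jan/2025:10:00:09 +0000] "GET /missing.html HTTP/1.1" 404 196
10.0.2.22 - - [01/Jan/2025:10:00:12 +0000] "GET /old-page.html HTTP/1.1" 404 196
EOF
}

# Count 404 responses per requested path, most frequent first.
# In this format, field 7 is the path and field 9 is the status code.
count_404() {
    awk '$9 == 404 { print $7 }' | sort | uniq -c | sort -rn |
        awk '{ print $1, $2 }'
}

sample_log | count_404
```

For a real server you would replace `sample_log` with something like `cat` on the actual access log.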

Let's say you start a web server process

and let it run for a few hours.

Many clients request pages during that time.

Some of the requests will be for

pages that don't actually exist, and the server will send

HTTP "404: Not found"

error results to the client.

The web server process might classify a few of the requests

as inappropriate and even hostile.

One example would be an enormously long pathname sent in an attempt at a buffer-overflow exploit against the server.

Then you shut down the web server process.

All of those events — the service start and stop, all the successful and failed requests, and the exploit attempts — would be directly recorded by the web server in log files specific to that one service.

We want to have a centralized process collecting the most interesting log events for all services running on the system. For the web server, that means when the process starts and stops, plus the exploit attempts, but not the routine operational messages about successful and failed page requests. That's why we have syslog.

Syslog is a General Log Collection Service

The syslog message logging standard grew out of the Sendmail project in the 1980s when that was one of the first Mail Transfer Agents on the Internet. Syslog quickly became the de facto standard logging method on UNIX-like operating systems, soon spreading to other operating systems and to network devices such as routers, managed switches, printers, and so on.

A syslog daemon or privileged process listens for messages sent to it. Each message has a facility, a priority, and the message content.

The facility

is intended to divide the messages into categories.

An auth message would report a user authentication

event, successful or failed.

An authpriv message would also be about user

authentication events, but it would contain more sensitive

information and need special protection.

A daemon message would report when a

privileged daemon process starts, stops,

or detects a reportable event.

A kern message would be from the kernel, the core of the

operating system itself, reporting events such as the

discovery of a piece of hardware or

errors caused by failing hardware.

I say that the facility "is intended to" specify a category,

because the concepts and the specific facility names

became frozen in place back in the mid 1980s.

There was no web service back then,

security was far less of a concern,

but

news

and

uucp

were important.

Today, however, who is going to schedule a job to run

after 11 PM when the long-distance telephone rates

are lower, dialing out with a modem

to contact another system to transfer

USENET

messages over

UUCP?

The priority

specifies how important the reporting agent considers the

message to be.

Priority ranges through eight levels

from debug,

which would include messages that seem rather trivial,

through emerg which stands for "emergency".

You configure a syslog service by telling it what to save,

and where.

To save all auth messages of priority

info and higher into one file,

and all

kernel messages into another file:

    auth.info    /var/log/authlog
    kern.*       /var/log/kernel

Programmers adding syslog messaging to an application generally make reasonable decisions about how to assign priority to events. The facility, however, sometimes seems to be oddly chosen, especially in the case of activities that didn't exist in 1990. There's a fair amount of "Well, that's how it's always been", and I think some cases where code authors decided to use a facility that seemed to be under-utilized.
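On the wire, the facility and priority travel together as one number at the start of each message. The following sketch uses the numeric codes from RFC 5424 for the facilities named above, and shows how a selector like auth.info matches that priority and anything more severe. The function names are my own, just for illustration.

```shell
#!/bin/sh
# Numeric codes from RFC 5424 for the facilities discussed above.
facility_code() {
    case $1 in
        kern) echo 0 ;;   user) echo 1 ;;  mail) echo 2 ;;
        daemon) echo 3 ;; auth) echo 4 ;;  news) echo 7 ;;
        uucp) echo 8 ;;   authpriv) echo 10 ;;
        *) return 1 ;;
    esac
}

# The eight priorities, from most to least severe.
priority_code() {
    case $1 in
        emerg) echo 0 ;;   alert) echo 1 ;;  crit) echo 2 ;;
        err) echo 3 ;;     warning) echo 4 ;; notice) echo 5 ;;
        info) echo 6 ;;    debug) echo 7 ;;
        *) return 1 ;;
    esac
}

# PRI = facility * 8 + priority, sent as "<PRI>" ahead of the message.
pri_value() {
    f=$(facility_code "$1") || return 1
    p=$(priority_code "$2") || return 1
    echo $(( f * 8 + p ))
}

# usage: selector_matches SELECTOR_PRIORITY MESSAGE_PRIORITY
# "More severe" means a numerically lower code.
selector_matches() {
    want=$(priority_code "$1") || return 1
    got=$(priority_code "$2") || return 1
    [ "$got" -le "$want" ]
}

pri_value auth info    # prints 38
```

So an auth.info rule would save an auth.err message but skip an auth.debug one.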

The kernel includes a mechanism for it to send

a message to a listening syslog process.

With Linux this is the

printk()

function.

Programs written in C/C++ can use the

syslog()

function in the standard C library.

A language can provide a function in its own libraries or

modules, as is the case with

Python,

PHP,

and others.

Shell scripts can use the

logger

command.

So, the operating system itself and any process running on it can send messages to a listening syslog process. The syslog service can also listen for messages coming in over the network, and it can relay messages to another syslog process running on a remote machine, if you choose to set it up that way.
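For shell scripts, a small wrapper around logger might look like the following sketch. The wrapper name and the backup-script messages are hypothetical; the -p and -t options select the facility.priority pair and the tag that prefixes the message.

```shell
#!/bin/sh
# Report an event to the local syslog service.
# usage: log_event FACILITY.PRIORITY TAG MESSAGE
log_event() {
    logger -p "$1" -t "$2" "$3"
}

# Hypothetical use inside a nightly backup script:
# log_event daemon.notice nightly-backup "backup finished"
# log_event daemon.err    nightly-backup "backup failed: disk full"
```

Where those messages end up depends entirely on the rules the listening syslog process has been given.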

The initial network support was limited to UDP port 514. The messages are small and frequent, so UDP made sense at the time. However, UDP delivery isn't guaranteed, and even if all the messages get there, they might arrive out of order. So, syslog implementations began to support reliable connections over TCP. And soon after that came the point of this page: connections protected by TLS, usually on TCP port 6514.

And that gets us to the range of available components....

Background of the Components

You will get syslog when you install a UNIX-family operating system. But since the late 1990s, extended versions have also been available.

For example, FreeBSD includes a fairly traditional

syslog server program as /usr/sbin/syslogd.

But two more capable alternatives are available,

syslog-ng and rsyslog.

On a current Linux system, you will get rsyslog by default

but you could choose to replace it with syslog-ng.

Rsyslog has largely won the popularity contest,

but both are frequently available.

Syslog-ng was first released in 1998. It added reliable transport with TCP, better timestamp handling, and other improvements. It added protection with TLS in 2008.

Rsyslog was first released in 2004. It included cryptographic protection from the early years, adding TLS support before 2008. Red Hat became interested in the early versions. That led to most current Linux distributions using rsyslog by default while usually offering syslog-ng as an option that you could manually install.

Rainer Gerhards is the primary author of rsyslog. He also wrote RFC 5424 describing the Syslog protocol, replacing RFC 3164.

Rsyslog documentation is limited and out of date. The project's TLS tutorial says it was written in May 2008. It speaks of a document that was a predecessor of RFC 5425, which formalized Syslog over TLS in March 2009, as a dormant project that might yield an RFC in the future. It refers to a more detailed document, but the supposed link to it returns "Invalid file specified". The pages that exist are of old design, apparently optimized for viewing on enormously heavy and hot cathode-ray tube displays. I speculate on whether the server runs SCO or Irix. The page content is largely hidden by all the banner ads.

You can install the rsyslog-doc package

and explore /usr/local/share/doc/rsyslog-doc/html/.

However, that just provides local copies of documents

from 2008 and soon after, containing broken links.

Rsyslog is extremely useful if you can become confident that you understand how it really works. It's 2025 now, and you have some documentation that was incomplete 17 years ago, so...

If you stick to the maintained documentation, avoiding the listed tutorials, you will probably be OK. Go to the main rsyslog.com page, go to the "Current Version" box at right, and click the "Doc" link. It seems to be a large collection of rather small pages, with the majority of the screen area filled by banner ads. Maybe that's why Google has done such a poor job of indexing it, instead sending you to forum posts by frustrated people over the past twenty years. The search results largely take you to discussions of long-deprecated syntax that didn't work back then.

OpenSSL 3.0.0 was released under the Apache License 2.0 in September 2021. The Apache Software Foundation says that "The Free Software Foundation considers the Apache License, Version 2.0 to be a free software license, compatible with version 3 of the GPL. [...] Apache 2 software can therefore be included in GPLv3 projects, because the GPLv3 license accepts our software into GPLv3 works. However, GPLv3 software cannot be included in Apache projects. The licenses are incompatible in one direction only, and it is a result of ASF's licensing philosophy and the GPLv3 authors' interpretation of copyright law."

As for cryptography, the OpenSSL project was founded in 1998. It provides a command-line tool and a library of cryptographic functions that applications can use.

Richard Stallman had a meltdown over how OpenSSL was open source but came with a license description he didn't prefer. So, the GnuTLS project was initially started around March–November 2000.

I strongly prefer OpenSSL over GnuTLS, partly because it's the opposite of what Stallman wants. I know that OpenSSL undergoes constant careful examination for security problems and other bugs. As for GnuTLS, I know that I'm not the only person with the impression that its primary goal is supporting an entirely Stallman-approved pure GNU environment.

However, the U.S. DoD specifies GnuTLS,

probably because that was the first implementation of TLS

that rsyslog supported,

thus the first syslog+TLS solution that Red Hat used,

and thus what the DoD fossilized in place.

So, the following assumes that you have added the

rsyslog-gnutls

package, which provides the shared library

/usr/lib64/rsyslog/lmnsd_gtls.so

and, of course, no further documentation.

Why Cryptography?

Cryptography in the form of TLS protects the confidentiality of log message content, authenticates the end points, and helps to support the availability of the logging service.

You will see CIA used as shorthand for information security goals. It may be easier to remember as that's also the name of a government agency, but the acronym was designed by the Department of Defense to also indicate priority. Confidentiality or maintaining secrecy is the most important security feature, then Integrity or accuracy is next, and Availability or reliability is the third most important.

Yes, keeping secrets is the most important thing. There's a chance that those secrets will actually be wrong, and it's even more likely that we will lose them, but that was the decision over a half-century ago.

As for why we need confidentiality,

consider what happens when you're trying to log in but

you accidentally hit the <Enter> key

in the middle of entering your user name.

You're on autopilot, because you have to authenticate

over and over every day.

You don't notice that it has switched to asking you for your

password, while you finish typing your user name and

pressing <Enter> on purpose.

Let's say it's my user name cromwell

but my fumbled typing converted that to a supposed

user crom with a password of well.

Now you're entering your super-secret password as a user name,

and pressing <Enter> just before you

realize what you've done.

"Well, that was silly of me", you think,

as you get back in sync and do it the correct way.

But consider what that episode sent into the log:

A failed login for nonexistent user crom,

a failed login for some jumble of gibberish containing

digits and punctuation marks,

then a successful login for existing user cromwell,

all of that on the same device or from the same remote

IP address.

Anyone who can capture the cleartext syslog traffic or

read the resulting log file now knows that the gibberish

string is the cromwell user's password.

Authentication failures are far more sensitive

events than the successes.

That's why authentication failures are facility

authpriv so they can be saved in a file

that only root can read,

while successful authentication events,

session locks and unlocks, and session terminations

are facility auth and can go into more

broadly viewable storage.

As for availability, as a general rule we don't have cryptographic tools to provide that. But this is a case where cryptography can be a great help. Our concern is that if an attacker could flood data into a log collector, he could completely fill the file system where it's stored. Denial of service against logging! Then an intrusion might be successful while leaving no tracks of exactly what happened.

Cryptography can provide authentication of hosts, and that means that only approved sources of syslog messages are allowed to send log data. The attempted data flood would be rejected. So, we want to authenticate the syslog sources, the clients in terms of syslog messages.

We just concluded that syslog messages may be sensitive, and we don't want to send them to just any random device using the log collector's IP address at the moment. So, we also want to insist that the log collector, the server in terms of syslog messages, also authenticates itself to the clients. Mutual authentication: the collector proves its identity to the sender, and the sender proves its identity to the collector, both directions based on cryptography.

TLS also provides data integrity protection, although that's not nearly as urgent given that the lower-level protocols of TCP, IP, and Ethernet all include their own checksums.

How To Authenticate?

Each host will have an asymmetric key pair, and its public key will be protected within a digital certificate. That's a data structure that contains the public key itself along with information about the certificate's owner, some time period of validity, and more, along with a digital signature of all that created by a Certificate Authority or CA.

Every host will have the CA's certificate, which is effectively the CA saying "This is my public key, and you should believe me because I say it is."

Your in-house PKI or Public-Key Infrastructure group will set up all the key pairs and certificates.

So, each host ends up with the CA's public key certificate, and that host's private key, plus that host's public key as a CA-signed certificate. The hosts will use the asymmetric cryptography to negotiate a shared session key, and then apply that to symmetric encryption of the message stream.

Once all that is set up, we must decide how the host authentication logic will work. Our choices are:

- x509/anon: The two endpoints can agree on a way to encrypt the message stream, although there's no indication of either end's identity or legitimacy.

- x509/certvalid: One endpoint can:

  - Require the other to present its certificate,

  - Verify the embedded signature, using the trusted CA public key known from the stored CA certificate, and

  - Require the other to answer a cryptographic challenge verifying that the remote host holds the corresponding private key.

- x509/name: Like x509/certvalid plus verifying that the remote host matches the CN or Common Name field within its certificate. That means either trusting DNS to always be there and be correct, or adding IP addresses as SAN or Subject Alternative Name values within the certificates.

x509/anon is obviously weak.

x509/name requires that each host,

each potential source of syslog messages,

must have its own certificate containing its hostname

and IP address(es).

Maybe you're already doing that as part of your

system deployment process,

to support IPsec or other security measures.

But if not, it's a lot of work for very limited benefit.

However, x509/certvalid

is a middle ground that should be adequate for many settings.

With that, there is one public/private key pair used by

all syslog clients,

with one shared certificate.

Remember that a digital certificate exists to contain a

public key,

and so it must be a public document to be useful.

An attacker would need the corresponding private key

to accomplish anything.

We're going to select cryptography for which we won't

need to worry about an attacker discovering the private key

by cryptanalysis.

The remaining opportunity would be to get root

access or otherwise violate file system security,

maybe by booting from rescue media.

The project I'm working for is focused on field-deployable

systems, where they want to be able to do a quick installation

from physically protected media.

x509/certvalid better matched the mission.

The Solution, Part 1: Cryptography

I will use OpenSSL to generate the keys and certificates, and then the GnuTLS library to protect rsyslog's communication.

- Create a Certificate Authority or CA. Do this on the machine that will be the CA:

  - Create the key pair. I am using Elliptic Curve Cryptography or ECC with the P-384 or secp384r1 curve defined by NIST.

        $ openssl genpkey -algorithm EC \
            -pkeyopt ec_paramgen_curve:P-384 \
            -pkeyopt ec_param_enc:named_curve \
            -out RootCA.key

    You could add the -aes256 option to password-protect the CA private key. This makes things safer in terms of confidentiality, but it will require you to enter that passphrase for every client signature creation.

  - Self-sign the CA certificate. This creates a certificate that will be valid for ten years:

        $ openssl req -x509 -new -key RootCA.key \
            -days 3650 -out RootCA.pem \
            -subj "/C=US/ST=New\ York/L=New\ York/O=Whatever/OU=HQ/CN=ca.whatever.example.org/"

    Modify the subj string as appropriate for your organization's country, state, location, name, and DNS domain. Notice that you must escape embedded spaces. If you leave off that optional parameter, it will ask you to answer a series of questions.

  - Examine the result:

        $ openssl x509 -text -noout -in RootCA.pem | less

- Create a host key pair and certificate. Notice that I am creating just one key pair to be used by all client hosts, and am using the domain whatever.example.org as the CN or Common Name.

  - Create the client key pair:

        $ openssl genpkey -algorithm EC \
            -pkeyopt ec_paramgen_curve:P-384 \
            -pkeyopt ec_param_enc:named_curve \
            -out client.key

  - Create a CSR or Certificate Signing Request. Notice that I am using just the DNS domain as the CN or Common Name:

        $ openssl req -new -key client.key \
            -out client.csr \
            -subj "/C=US/ST=New\ York/L=New\ York/O=Whatever/OU=HQ/CN=whatever.example.org/"

  - Create the client certificate:

        $ openssl x509 -req -in client.csr \
            -CA RootCA.pem -CAkey RootCA.key \
            -CAcreateserial -days 3650 -out client.crt

- Distribute the keys. Each host needs its private key file, its certificate, and the CA's certificate. It's up to you to decide where to store them. However, it makes sense to use /etc/ssl/private for the private key and /etc/ssl/certs for the certificates. That's what I'll show below.
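As a sketch of that distribution step, the following function copies the three files into place with restrictive permissions on the private key. The function name and the optional staging prefix are my own inventions for illustration. It keeps the original file names, so rename as needed to match whatever your rsyslog configuration references.

```shell
#!/bin/sh
# usage: install_tls_files KEY CERT CA_CERT [ROOT]
# ROOT is an optional staging prefix (hypothetical, handy for testing).
# Leave it off to install into the live /etc/ssl tree as root.
install_tls_files() {
    key=$1 cert=$2 ca=$3 root=${4:-}
    mkdir -p "$root/etc/ssl/private" "$root/etc/ssl/certs" || return 1
    # The private key must be readable by its owner only.
    install -m 0600 "$key" "$root/etc/ssl/private/" || return 1
    # Certificates are public documents, so world-readable is fine.
    install -m 0644 "$cert" "$ca" "$root/etc/ssl/certs/"
}

# Example, run as root on a client:
# install_tls_files client.key client.crt RootCA.pem
```

On a live system the /etc/ssl/private directory itself should also be closed to ordinary users. Most distributions ship it that way, but it's worth checking.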

The above will work fine with

x509/certvalid authentication.

For x509/name you must repeat the second major step above, creating a host key pair and certificate, for each host while replacing "client" with the hostname and then using the hostname as the CN value and adding an additional parameter to specify the SAN or Subject Alternative Name.

Something like the following if

thishost and 1.2.3.4

are the specific hostname and IP address

I'm handling here:

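    $ openssl genpkey -algorithm EC \
        -pkeyopt ec_paramgen_curve:P-384 \
        -pkeyopt ec_param_enc:named_curve \
        -out thishost.key
    $ openssl req -new -key thishost.key \
        -out thishost.csr \
        -subj "/C=US/ST=New\ York/L=New\ York/O=Whatever/OU=HQ/CN=thishost/" \
        -addext "subjectAltName = DNS:thishost.whatever.example.org,IP:1.2.3.4"
    $ openssl x509 -req -in thishost.csr \
        -CA RootCA.pem -CAkey RootCA.key \
        -CAcreateserial -days 3650 \
        -copy_extensions copy \
        -out thishost.crt

The -copy_extensions copy option, available in OpenSSL 3.0 and later, carries the SAN from the CSR into the signed certificate. Without it, openssl x509 -req ignores the extensions requested in the CSR.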
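Before distributing anything, it may be worth confirming that a certificate really chains to the CA, which is essentially the check that x509/certvalid depends on, and that the names inside it are what you intended. This is a sketch with function names of my own choosing. The -ext option needs OpenSSL 1.1.1 or later.

```shell
#!/bin/sh
# Succeeds only if CERT carries a valid signature chaining to CA_CERT.
# usage: cert_chains_to_ca CA_CERT CERT
cert_chains_to_ca() {
    openssl verify -CAfile "$1" "$2" >/dev/null 2>&1
}

# Print the Subject and any Subject Alternative Name values of CERT.
# usage: show_cert_names CERT
show_cert_names() {
    openssl x509 -noout -subject -ext subjectAltName -in "$1"
}

# Examples, run in the directory holding the files created above:
# cert_chains_to_ca RootCA.pem client.crt && echo "signed by our CA"
# show_cert_names thishost.crt
```

If the SAN is missing from the output of the second function, the extension did not make it from the CSR into the signed certificate.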

The Solution, Part 2: Rsyslog Configuration

This is already complicated enough! We don't want to make this any harder to maintain.

Many Linux utilities, including rsyslog,

now support having a primary configuration file

and an associated directory holding

further configuration pieces.

For rsyslog those are /etc/rsyslog.conf

and /etc/rsyslog.d/*.conf, respectively.

Updates or patches for the rsyslog package

will appear from time to time.

It's a crucial system utility that runs as root

so of course you want to apply patches!

However, if you have modified /etc/rsyslog.conf,

the package update process probably will be unable to

merge your changes.

Do not change /etc/rsyslog.conf.

Instead, create a new file in /etc/rsyslog.d/

with a name you select,

as long as it ends with .conf.

Configure The Log Collector (Server)

Store this in

/etc/rsyslog.d/tls-collector.conf

or similar:

    # Log each client host in its own file in /var/log/clients/. If the
    # clients are logging a timestamp every minute, then the presence of
    # a file older than 60 seconds in /var/log/clients/* indicates a
    # crashed system or stopped rsyslog daemon.
    #
    # Also keep a merged list of all messages in /var/log/clients-all.
    # The following saves everything of level info and higher, which
    # should be plenty but you can change this.
    $template RemoteHost,"/var/log/clients/%HOSTNAME%"
    *.info ?RemoteHost
    *.info /var/log/clients-all

    # Use the gtls library from GnuTLS (versus ossl from OpenSSL)
    # with these keys and certificates. This assumes:
    # -- /etc/ssl/certs holds certificates, and
    # -- /etc/ssl/private holds private keys
    global(
        DefaultNetstreamDriver="gtls"
        DefaultNetstreamDriverCAFile="/etc/ssl/certs/ca.pem"
        DefaultNetstreamDriverCertFile="/etc/ssl/certs/collector.crt"
        DefaultNetstreamDriverKeyFile="/etc/ssl/private/collector.key"
    )

    # Use TLS with x509/certvalid authentication.
    module(
        load="imtcp"
        StreamDriver.Name="gtls"
        StreamDriver.Mode="1"
        StreamDriver.Authmode="x509/certvalid"
    )

    # Listen on the standard TCP/6514.
    input(
        type="imtcp"
        port="6514"
    )

Also do whatever is needed to allow the syslog messages through the firewall. Something like this:

    $ su
    # firewall-cmd --permanent --add-service=syslog-tls
    # systemctl restart firewalld
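The comment at the top of the collector configuration suggests using the age of each per-client file as a health check. Here's a rough sketch of that idea. The function name is mine, and the default threshold of 120 seconds is an assumption that allows for one missed message before raising an alarm. It relies on GNU stat.

```shell
#!/bin/sh
# List per-client log files not modified within MAX_AGE seconds,
# along with their age in seconds.
# usage: stale_clients [DIR] [MAX_AGE]
stale_clients() {
    dir=${1:-/var/log/clients}
    max_age=${2:-120}            # assumed threshold, tune as needed
    now=$(date +%s)
    for f in "$dir"/*; do
        [ -f "$f" ] || continue
        mtime=$(stat -c %Y "$f") # GNU stat; BSD stat would use -f %m
        age=$(( now - mtime ))
        if [ "$age" -gt "$max_age" ]; then
            printf '%s %s\n' "${f##*/}" "$age"
        fi
    done
}

# Example, run on the collector:
# stale_clients /var/log/clients 120
```

Any host name it prints belongs to a client that has gone quiet, whether from a crash, a stopped rsyslog daemon, or a network problem.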

Configure Each Log Sender (Client)

Store this in

/etc/rsyslog.d/tls-client.conf

or similar:

    # Send a "Mark" message every 60 seconds
    module(
        load="immark"
        interval="60"
    )

    # Use the gtls library from GnuTLS (versus ossl from OpenSSL)
    # with these keys and certificates. Change paths if you want,
    # but the private key must be unreadable by anyone but root!
    global(
        DefaultNetstreamDriver="gtls"
        DefaultNetstreamDriverCAFile="/etc/ssl/certs/ca.pem"
        DefaultNetstreamDriverCertFile="/etc/ssl/certs/sender.crt"
        DefaultNetstreamDriverKeyFile="/etc/ssl/private/sender.key"
    )

    # Send everything (*.debug meaning all facilities level debug or higher)
    # and let the collector decide what to keep. Send it over TCP/6514
    # with TLS with x509/certvalid authentication.
    *.debug action(
        type="omfwd"
        protocol="tcp"
        target="$ip"
        port="6514"
        StreamDriver="gtls"
        StreamDriverMode="1"
        StreamDriverAuthMode="x509/certvalid"
    )

The client configuration is where the U.S. DoD STIG document falls behind. It tells you to use the following, along with other deprecated syntax:

    $DefaultNetstreamDriver gtls
    $ActionSendStreamDriverMode 1
    *.* @@[remoteloggingserver]:[port]
    [... and so on...]

Some documentation I initially found through a Google search started with dire red bold content telling me to instead find the documentation for the current major release, and then immediately followed that with the announcement that what it was describing was already deprecated back when it was the current release.

The STIG also tells you to modify /etc/rsyslog.conf, which you hopefully will notice (and then regret) the next time the rsyslog package is patched.
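Given how easy it is to get the syntax wrong, it may help to have rsyslog check the configuration before you restart the service. This sketch wraps the rsyslogd -N1 configuration check. The wrapper name is hypothetical, and the default path assumes the usual main configuration file, which pulls in the pieces under /etc/rsyslog.d/.

```shell
#!/bin/sh
# Check rsyslog configuration syntax without starting the daemon.
# -N1 requests a configuration check, -f names the main config file.
# usage: check_rsyslog_config [CONFIG_FILE]
check_rsyslog_config() {
    rsyslogd -N1 -f "${1:-/etc/rsyslog.conf}"
}

# Restart only if the check passes (run as root):
# check_rsyslog_config && systemctl restart rsyslog
```

A clean check only means the syntax parsed. It says nothing about whether the certificates and keys are the right ones.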

Start Collecting Packets

I have an example packet trace in which the client (sender)

was 10.0.2.20 and the collector (server) was 10.0.2.9.

I captured it with

tcpdump —

limit buffering so the last packet always gets into the file,

save the trace into a file,

and capture all packets between the two hosts.

That way I'll see all the details of the connection,

including any ICMP packets if something doesn't get

started correctly.

    $ sudo tcpdump --packet-buffered \
        -w syslog-tls.pcap \
        host 10.0.2.20 and host 10.0.2.9

Next, restart the rsyslog service

on the collector (server) and make sure that it is listening.

The important lines are the two rsyslogd entries at the end, listening on syslog-tls.

This system also listens for connections over TCP/60

from a remote audit daemon,

which would send the SELinux audit events normally

stored locally in /var/log/audit/audit.log:

    $ su
    # systemctl restart rsyslog
    # lsof -i
    COMMAND   PID   USER  FD TYPE DEVICE SIZE/OFF NODE NAME
    auditd   1039   root 12u IPv6  23200      0t0  TCP *:60 (LISTEN)
    chronyd  1079 chrony  5u IPv4  24041      0t0  UDP collector.example.org:323
    chronyd  1079 chrony  6u IPv6  24042      0t0  UDP collector.example.org:323
    sshd     1113   root  3u IPv4  24419      0t0  TCP *:ssh (LISTEN)
    sshd     1113   root  4u IPv6  24421      0t0  TCP *:ssh (LISTEN)
    rsyslogd 1390   root  4u IPv4  26189      0t0  TCP *:syslog-tls (LISTEN)
    rsyslogd 1390   root  5u IPv6  26190      0t0  TCP *:syslog-tls (LISTEN)

It's listening!

Now let me start the rsyslog service on the

sender (client) and verify that it connected.

On the client I do and see:

    $ su
    # systemctl restart rsyslog
    # lsof -i
    COMMAND    PID USER FD TYPE DEVICE SIZE/OFF NODE NAME
    audisp-re 1057 root 4u IPv4  29727      0t0  TCP logclient:60->10.0.2.9:60 (ESTABLISHED)
    sshd      1337 root 3u IPv4  28245      0t0  TCP *:ssh (LISTEN)
    sshd      1337 root 4u IPv6  28254      0t0  TCP *:ssh (LISTEN)
    rsyslogd  1460 root 6u IPv4  29822      0t0  TCP logclient:45730->10.0.2.9:syslog-tls (ESTABLISHED)

And on the server:

    # lsof -i
    COMMAND   PID   USER  FD TYPE DEVICE SIZE/OFF NODE NAME
    auditd   1039   root  4u IPv6  29102      0t0  TCP collector:60->10.0.2.20:60 (ESTABLISHED)
    auditd   1039   root 12u IPv6  23200      0t0  TCP *:60 (LISTEN)
    chronyd  1079 chrony  5u IPv4  24041      0t0  UDP collector.example.org:323
    chronyd  1079 chrony  6u IPv6  24042      0t0  UDP collector.example.org:323
    sshd     1113   root  3u IPv4  24419      0t0  TCP *:ssh (LISTEN)
    sshd     1113   root  4u IPv6  24421      0t0  TCP *:ssh (LISTEN)
    rsyslogd 1390   root  4u IPv4  26189      0t0  TCP *:syslog-tls (LISTEN)
    rsyslogd 1390   root  5u IPv6  26190      0t0  TCP *:syslog-tls (LISTEN)
    rsyslogd 1390   root 13u IPv4  29104      0t0  TCP collector:syslog-tls->10.0.2.20:45730 (ESTABLISHED)

Yes! It has one connection over IPv4, and is listening for more on both IPv4 and IPv6.
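If you need to repeat that check on many hosts, reading lsof output by eye gets old. Here's a small sketch that summarizes the rsyslogd lines. The function name is my own, and it assumes the port shows up either by its service name syslog-tls or as the number 6514.

```shell
#!/bin/sh
# Summarize rsyslogd's syslog-over-TLS sockets from "lsof -i" output
# read on standard input.
rsyslog_tls_state() {
    awk '$1 == "rsyslogd" && $0 ~ /:(syslog-tls|6514)/ {
             if ($NF == "(LISTEN)") listen++
             else if ($NF == "(ESTABLISHED)") est++
         }
         END { printf "listening=%d established=%d\n", listen, est }'
}

# Example, run as root on the collector or on a client:
# lsof -i | rsyslog_tls_state
```

On the collector shown above this would report two listening sockets and one established connection. On a client you would expect no listeners and one established connection.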

Now I can interrupt the tcpdump capture

with <Ctrl-C> and then view it with

wireshark:

$ wireshark syslog-tls.pcap

It's Really TLS 1.3, But...

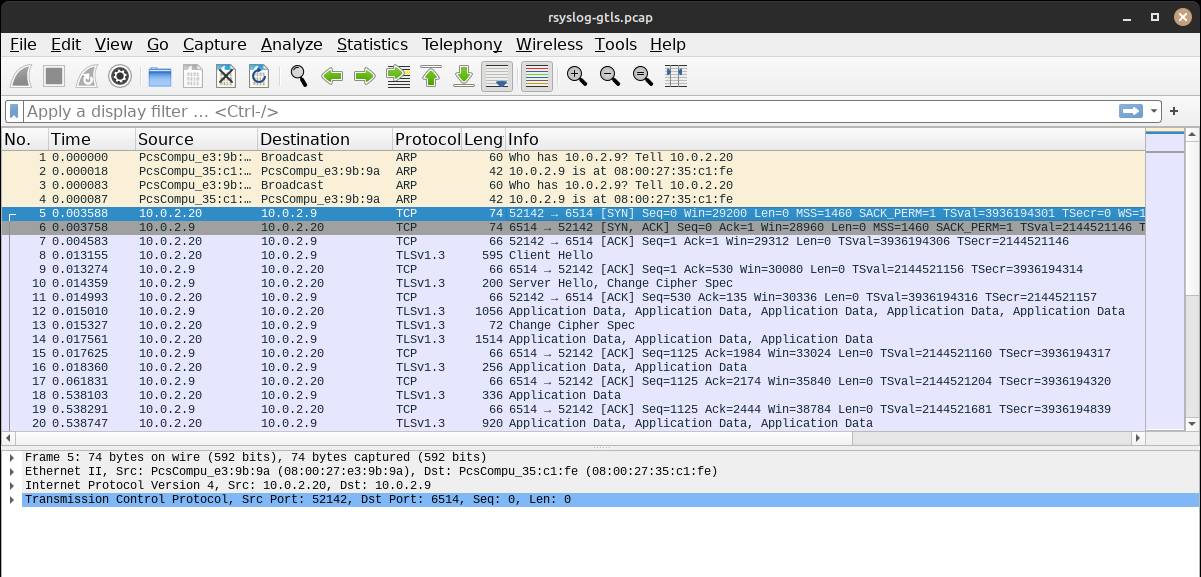

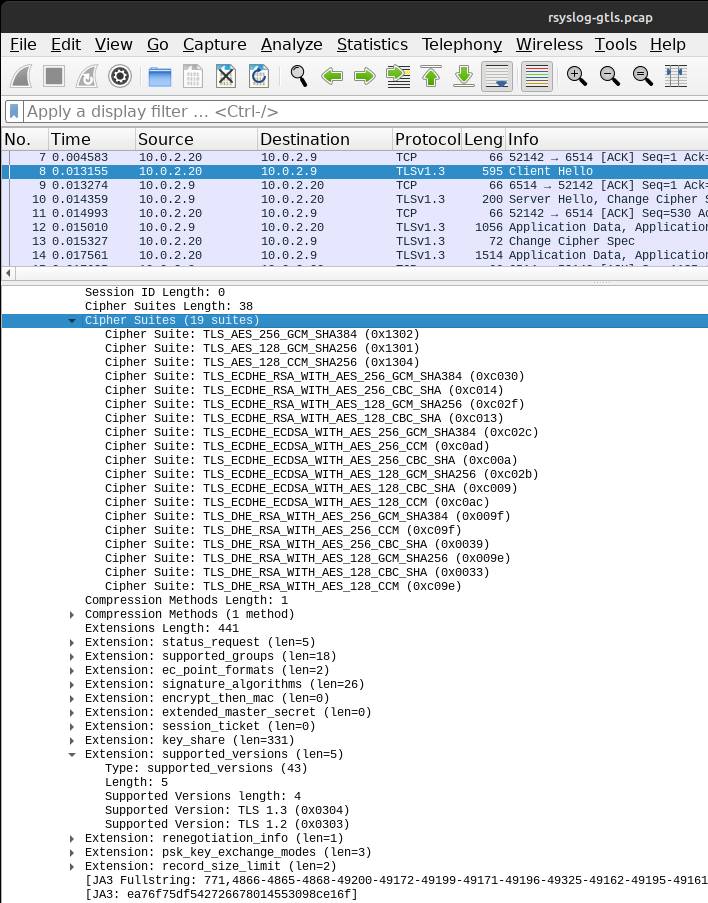

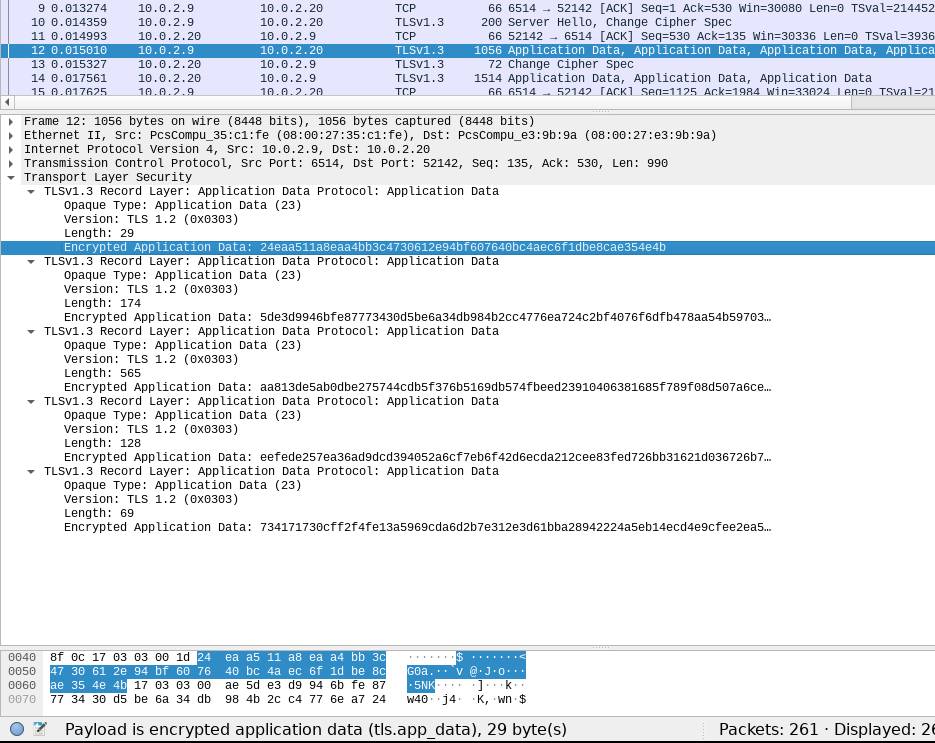

You may need to demonstrate and explain your solution. Here's a first look at my packet capture.

The top pane of Wireshark shows the client using ARP to get the server's Ethernet hardware address (packets 1-4), establishing a TCP connection (packets 5-7), the crypto negotiation (packets 8-11), and then the traffic is encrypted beyond that.

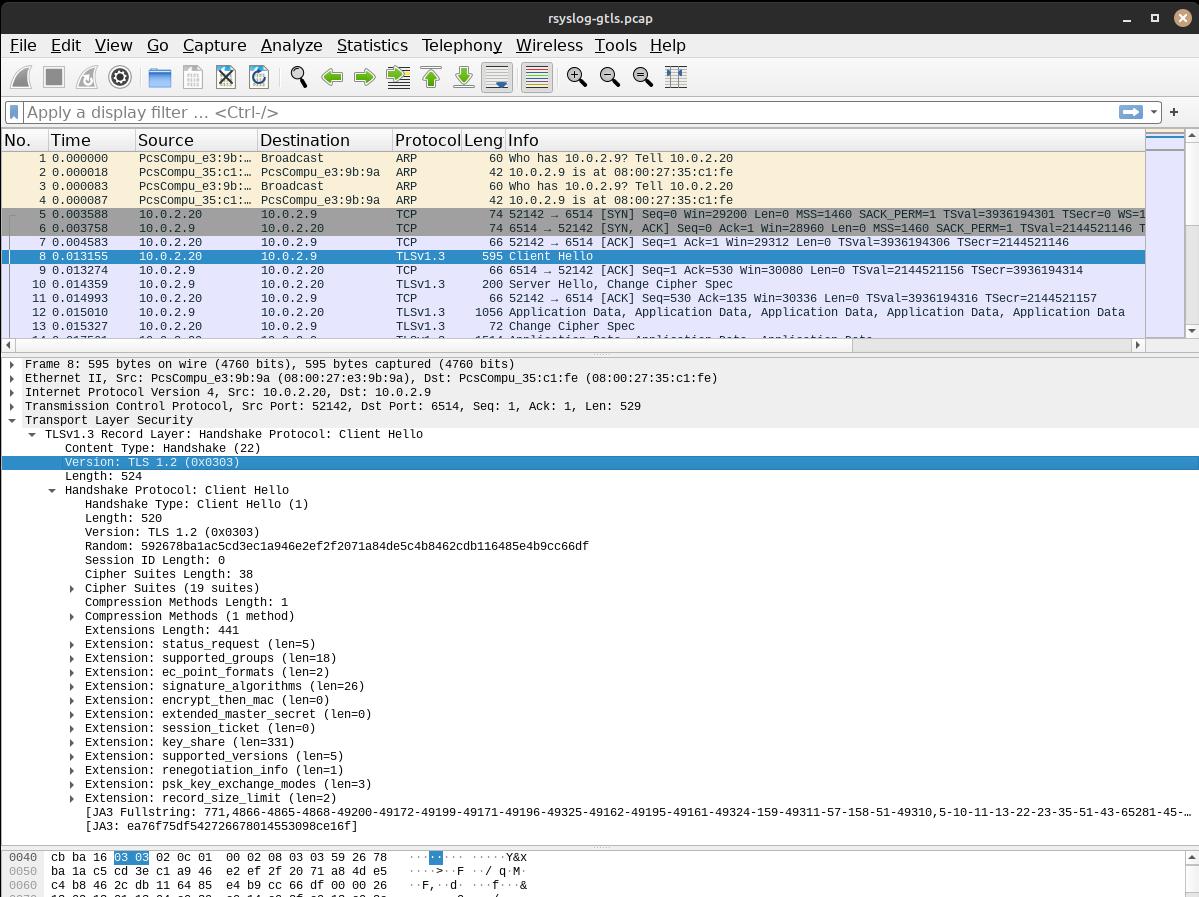

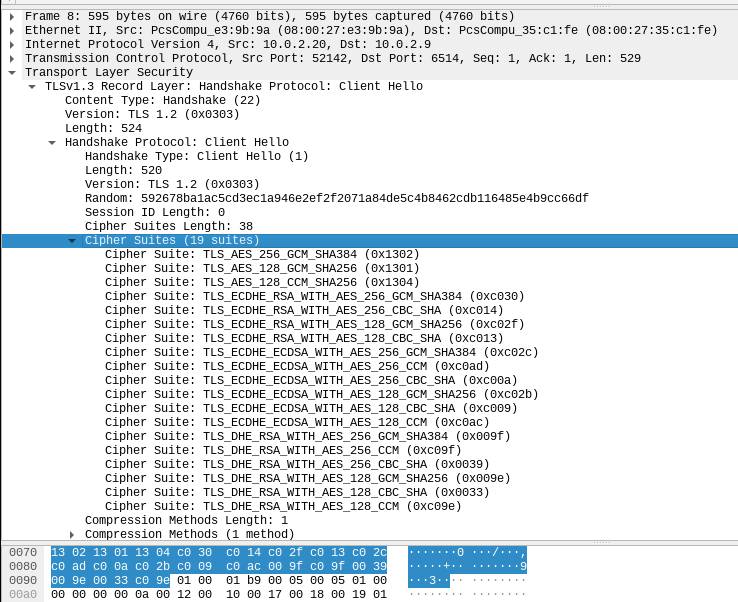

Expanding the Client Hello packet, it's a little confusing. The TCP layer just names the ports, from 52142 to 6514. Within the TLS payload, it's marked in two fields as 0x0303 or TLS 1.2. However, Wireshark presents this in the top pane as TLS 1.3.

If I expand the Cipher Suites section of the Client Hello, I see that it lists three out of the five defined TLS 1.3 cipher suites followed by sixteen TLS 1.2 suites. As per RFC 8446 defining TLS version 1.3, the five possible cipher suite definitions are:

| Cipher suite | Value |
|---|---|
| TLS_AES_128_GCM_SHA256 | {0x13,0x01} |
| TLS_AES_256_GCM_SHA384 | {0x13,0x02} |
| TLS_CHACHA20_POLY1305_SHA256 | {0x13,0x03} |
| TLS_AES_128_CCM_SHA256 | {0x13,0x04} |
| TLS_AES_128_CCM_8_SHA256 | {0x13,0x05} |
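When you're reading raw values out of a capture, a tiny lookup saves flipping back to the RFC. This sketch just encodes the five values listed above. The function name is hypothetical.

```shell
#!/bin/sh
# Map a two-byte TLS 1.3 cipher suite value to its RFC 8446 name.
# usage: tls13_suite_name 0x1302
tls13_suite_name() {
    case $1 in
        0x1301) echo TLS_AES_128_GCM_SHA256 ;;
        0x1302) echo TLS_AES_256_GCM_SHA384 ;;
        0x1303) echo TLS_CHACHA20_POLY1305_SHA256 ;;
        0x1304) echo TLS_AES_128_CCM_SHA256 ;;
        0x1305) echo TLS_AES_128_CCM_8_SHA256 ;;
        *) echo "not a TLS 1.3 suite: $1" >&2; return 1 ;;
    esac
}

tls13_suite_name 0x1302    # prints TLS_AES_256_GCM_SHA384
```

Anything outside 0x1301 through 0x1305 is a suite from TLS 1.2 or earlier, and the function returns a failure status for it.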

Scrolling and rearranging somewhat,

the Client Hello included a supported_versions

extension.

It is reporting that it supports

the cipher suites for both TLS 1.2 and TLS 1.3.

It has no idea that it is communicating with the same

versions of the

rsyslog and GnuTLS packages.

AES is the Advanced Encryption Standard cipher, originally known as Rijndael. It's a block cipher, which means that you can use it as a module in several different modes. TLS 1.3 only supports authenticated encryption, more formally known as AEAD or Authenticated Encryption with Associated Data. GCM and CCM are Galois/Counter Mode and Counter with CBC MAC or (deep breath) Counter with Cipher Block Chaining Message Authentication Code, respectively. CCM is only defined for block ciphers with 128-bit blocks.

ChaCha20-Poly1305 is also AEAD, combining the ChaCha20 stream cipher with the Poly1305 MAC for authentication.

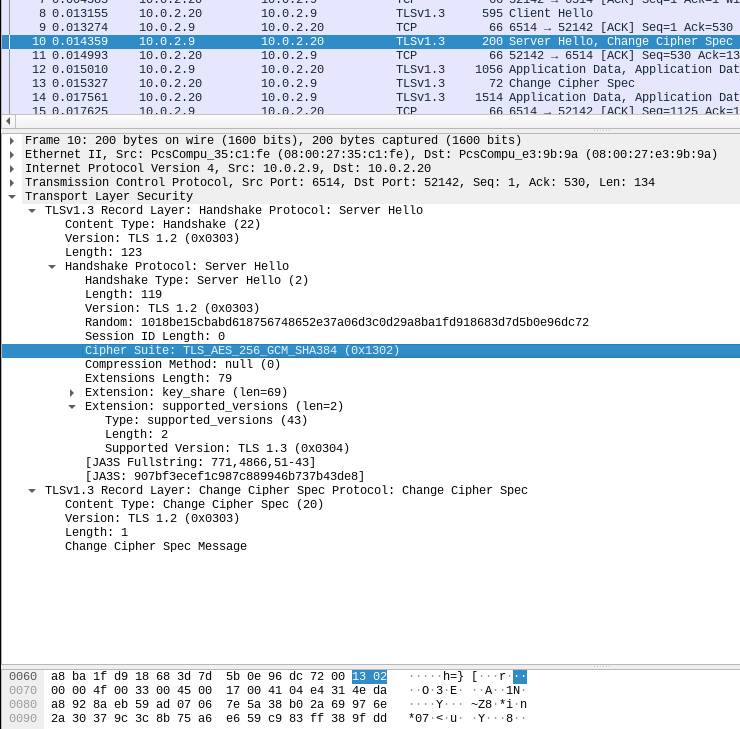

Two packets later, the server sends its

Server Hello message.

It specifies that they will use

TLS_AES_256_GCM_SHA384 or 0x1302

which means it's going to be TLS 1.3,

which it also lists as the only supported version.

After that, the traffic is encrypted.

Now, why do the end points report the encapsulated traffic as TLS 1.2 when they really support both 1.2 and 1.3, and the server will insist on the strongest mutually supported version?

What I have managed to find about this blames the situation on legacy middleboxes. That is, entrenched designs of firewalls or other filtering devices, reverse proxies, and so on.

Many of them understand explicit references to TLS v1.2 but not v1.3. They have the desired feature set and can handle TLS v1.3 that calls itself v1.2, and replacement would be expensive and disruptive. We can assume that they are out there and will remain in many settings. And so, libraries like GnuTLS will implement TLS v1.3 in a way that these devices accept.

Yes, with a name referencing Star Trek: The Next Generation, which originally aired 1987–1994.
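If you have to explain the version fields to an auditor, the rule for reading a Server Hello can be sketched in a few lines. The legacy version field stays at 0x0303 for the benefit of the middleboxes, and the version selected in the supported_versions extension, when present, is the one that counts. The function names here are my own.

```shell
#!/bin/sh
# Translate a two-byte TLS version value to a readable name.
tls_version_name() {
    case $1 in
        0x0301) echo "TLS 1.0" ;;
        0x0302) echo "TLS 1.1" ;;
        0x0303) echo "TLS 1.2" ;;
        0x0304) echo "TLS 1.3" ;;
        *) echo "unknown ($1)" ;;
    esac
}

# usage: negotiated_version LEGACY_VERSION [SELECTED_VERSION]
# SELECTED_VERSION is the value from the Server Hello's
# supported_versions extension, if that extension is present.
negotiated_version() {
    if [ -n "${2:-}" ]; then
        tls_version_name "$2"
    else
        tls_version_name "$1"
    fi
}

negotiated_version 0x0303 0x0304    # prints TLS 1.3
negotiated_version 0x0303           # prints TLS 1.2
```

That matches what Wireshark does when it labels the connection as TLS 1.3 in the top pane despite the 0x0303 values in the record and handshake headers.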