Bob's Blog

Which Programming Language Should I Learn?

Someone recently asked me, "Which programming language should I learn?" The answer depends on what you want to accomplish. On some level it doesn't matter. But that's a very academic level!

One obvious choice is to use the Bash shell for the overall logic and the fundamental set of commands (grep, sort, sed, and so on) for the functional components. If you exclusively use Windows, then the PowerShell command-line interface would be the analog. If you're going to be a database administrator, then you'd better know SQL.

In a following article I explain how the command-line interface or the "shell" is so powerful, and how it's actually an interactive programming environment. Then in the second and third articles in that sequence, I first show you how to write and customize your first shell script, and then how to create a "real world" useful script to analyze web server logs.

A powerful alternative is Python, which you could use anywhere. Or, if you have specific and narrow plans, you may find that it makes the most sense to start somewhere else.

I Said That Your Choice Doesn't Matter, But...

If you can solve a problem with one programming language, then you can solve it with any programming language, or at least any of the languages I would expect you to be considering. Here's why.

The German mathematician David Hilbert was enormously influential, presenting a collection of 23 problems that set up much of 20th-century mathematical research. But then he tried to organize all of mathematics into a complete and consistent set of axioms. The Austro-Hungarian logician Kurt Gödel proved that Hilbert's goal was logically impossible. Any consistent set of axioms rich enough to describe arithmetic will let you make statements that are true but can't be proved from those axioms.

American logician and mathematician Alonzo Church asked, "Well, what about formal logic?" He proved that a challenge posed by Hilbert, to apply logic to answer questions about mathematics, was similarly limited.

English mathematician Alan Turing extended that into the Church–Turing thesis, developing the abstraction that's now called the Turing machine and supporting the claim that if a problem can be solved, it can be solved with any general-purpose computer hardware or language.

David Hilbert — "I want to make all of mathematics complete and consistent."

Kurt Gödel — "Sorry, but that's impossible."

Alonzo Church — "And, it's also impossible for formal logic."

Alan Turing — "Here's further formal proof and a very useful abstraction."

That was a lot of logic over the period roughly 1900 to 1939. We now had formal proofs that:

- Mathematics is "incomplete", meaning that you can pose unsolvable mathematics problems.

- Formal logic is similarly incomplete: you can define a formal logic problem that you cannot solve with formal logic.

- If a formal logic problem can be solved, then it could be solved with the simplest imaginable abstraction of a logical processor and associated processing rules, what Alan Turing described and what we now call a Turing machine.

There wasn't a field called computer science back then, because there weren't computers. All this was in the field of Formal Logic, which had always been part of Philosophy but was then blending into Mathematics.

If a computing system can simulate any Turing machine, then it can solve any problem that can be solved. Today we say that such a system is Turing-complete. We could say this about a processor's instruction set, or about a programming language. Most programming languages, and all of the ones you should be considering, are Turing-complete. (Along with many you should stay very far away from, such as APL, the weirdest language I ever took a class about.)
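To make the Turing machine idea a little more concrete, here's a minimal sketch of one in Python. None of this comes from Turing's paper or from any library: the function name `run_turing_machine`, the rule-table layout, the `_` blank symbol, and the `max_steps` safety limit are all my own assumptions for illustration. The sample rule table adds one to a binary number.

```python
from collections import defaultdict


def run_turing_machine(rules, tape, state="start", blank="_", max_steps=10_000):
    """Run a one-tape Turing machine and return the non-blank part of the tape.

    rules maps (state, symbol) -> (new_symbol, move, new_state), where move
    is -1 (left) or +1 (right). The machine halts as soon as no rule matches
    the current (state, symbol) pair. All of these conventions are
    illustrative assumptions, not a standard API.
    """
    # The tape is unbounded in both directions; unvisited cells read as blank.
    cells = defaultdict(lambda: blank, enumerate(tape))
    head = 0
    for _ in range(max_steps):
        key = (state, cells[head])
        if key not in rules:
            break  # no applicable rule: the machine halts
        new_symbol, move, state = rules[key]
        cells[head] = new_symbol
        head += move
    else:
        raise RuntimeError("step limit reached; the machine may never halt")

    used = [index for index, symbol in cells.items() if symbol != blank]
    if not used:
        return ""
    return "".join(cells[i] for i in range(min(used), max(used) + 1))


# Hypothetical example machine: add 1 to a binary number written on the tape.
INCREMENT_RULES = {
    ("start", "0"): ("0", +1, "start"),   # scan right to the end of the number
    ("start", "1"): ("1", +1, "start"),
    ("start", "_"): ("_", -1, "carry"),   # passed the last digit, turn around
    ("carry", "1"): ("0", -1, "carry"),   # 1 + 1 = 0, keep carrying left
    ("carry", "0"): ("1", -1, "done"),    # absorb the carry and stop
    ("carry", "_"): ("1", -1, "done"),    # ran off the left edge: new digit
}

if __name__ == "__main__":
    print(run_turing_machine(INCREMENT_RULES, "1011"))
```

Given the tape `1011`, the machine walks right to the end of the number, then carries back toward the left, leaving `1100`. The machine itself is nothing more than a lookup table and a read/write head. Any language that can express this loop and table can, in principle, run any such machine.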

Ahah! All that early 20th century logic proves that it doesn't matter which language we use! Well, not exactly.

Computability means being able to solve the logical problem with unlimited memory and patience. However, just because you can use a particular language to solve a particular problem doesn't mean that you should.

How much time and memory will the program require?

How long will it take you to create the working program?

Think about someone, possibly you, looking at your program a few months from now. Will they be able to easily understand how it works?

And, overall, does the tool fit the task? Is this language a reasonably natural fit to the task?

Some Choices Are Obvious

If you're going to be a database administrator, or a cybersecurity analyst specializing in database exploits and protection, then you must know SQL.

If you're going to be developing operating system components, or network services (e.g., Nginx, Apache, various authentication services), those things are generally written in C plus variants like C++ and C#.

If you're doing web programming, writing programs that are embedded within or referenced from within the web pages, then what's your organization's plan? For client-side programming, code that will run on the client or within the browser, that might be Java or JavaScript. For server-side programming, code that runs on the server to dynamically build page content and add interactivity, that might be PHP, Ruby, Python, ASP.NET, Java, or others.

If you're going to be a generalized cybersecurity analyst, the targets you want to protect will largely be programmed in C. But they're only originally written in C. The reasonably human-friendly source code is converted by compiler programs into machine instructions. The attackers won't helpfully provide their source code. Usually you only get the exploits themselves, which are nothing but binary machine instructions. So, certainly C, but also the Intel/AMD instruction sets, and the ARM instruction sets. This is not the easy place to start!

Something Else To Consider — Compiled Versus Interpreted

C is a compiled language.

- You write what's called source code, which should be reasonably easy for you to read and understand once you know the syntax and semantics of the language.

- You use a compiler program to convert that into machine instructions, leaving you with your original source code that you can read, and an executable file that the CPU can run. If the compiler instead reports that you violated the syntax of the language, go back to step 1 and fix your syntax errors.

- You run that executable, observe what happened, then go back to step 1 to fix your logical errors or add parts that you hadn't written yet.

An IDE or integrated development environment such as Eclipse or NetBeans can make the overall process more efficient, but the above is what's going on.

Python is an interpreted language.

- You write your program, which again should be reasonably easy for you or someone else to read and understand.

- You run that program, which begins with a line effectively saying "This is a Python program, so start the /bin/python interpreter program and have it read and run this." There is no compiler step; the interpreter reads and runs the program directly. If you made syntax errors, the interpreter will tell you about them and you return to step 1 to fix the syntax. If the syntax was OK but the program doesn't do what you hoped for, you return to step 1 to fix the logic.
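Here's a small sketch of what that looks like in practice. The first line is the "this is a Python program" line. I've written it as `#!/usr/bin/env python3`, a common form, but the interpreter's location on your system is an assumption and may differ. The function names `average` and `check_syntax` are made up for this example. The second function uses Python's built-in `compile()` to show the interpreter catching a syntax error before anything runs.

```python
#!/usr/bin/env python3
# The line above tells the operating system which interpreter should read
# and run this file. The exact path is an assumption; adjust for your system.


def average(values):
    """Return the arithmetic mean of a non-empty list of numbers."""
    return sum(values) / len(values)


def check_syntax(source):
    """Return None if source parses as Python, otherwise the error message."""
    try:
        compile(source, "<example>", "exec")  # parse only, nothing is executed
    except SyntaxError as err:
        return str(err)
    return None


if __name__ == "__main__":
    print(average([2, 4, 9]))
    # The closing parenthesis is missing, so this is a syntax error.
    print(check_syntax("print('hello'"))
    # This parses fine. Whether it does what you wanted is a logic question.
    print(check_syntax("total = 2 + 2"))
```

If you saved this under a name of your choosing, say `average.py`, you could mark it executable with `chmod +x average.py` and run it as `./average.py`. There's no separate compile step between editing the file and running it.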

The interactive command-line environment, the Bash shell on Linux or the PowerShell on Windows, is an interpreted language. You are typing commands in which you may violate the syntax, or your requested logic may not have the desired result. Maybe just interactively entering commands all day accomplishes what you want. But you can copy your command history into a file, insert a starting line that says "This is a shell script, so start the /bin/bash program and have it read and run this." Now you have a working shell script!

The next article explains how the command-line environment is a programming environment if you choose to take advantage of it, while the following article walks you through creating your first shell script.

Interpreted languages generally lead to faster development. There's one less step in the loop, and sometimes you fix both syntax and logical errors at the same time.

Compiled languages can lead to executable programs that run faster. Of course the developers of interpreted languages know about this, so they work to make their interpreters run faster.

I wouldn't worry about the slight speed disadvantage of interpreted languages! You're presumably reading this because you want some help deciding what to learn, and this might be your first language ever. It surely won't be your last one! You aren't going to start with high-performance scientific computing on gigantic data sets. My suggestion is to choose an interpreted language because there's less complexity, no step involving a compiler. That means the Bash shell or Python. You very likely already know some of what would go into shell scripts, while with Python everything is brand new.

I did some Linux work at the University of Alaska Fairbanks, with a group that did high-performance computing on some very large geophysical data sets. Here's their facility in late September.

If you hope to work in cybersecurity, web site operations, or server troubleshooting, then many of your tasks end up being similar.

You will have one or more log files to work with. These are large text files with one event per line. If these are web server access and error logs, each line has the same format as far as what's in the first, second, and so on fields. Other logs will hold different types of events with their own specific formats.

Whatever your end goal may be, you reach it by detecting, or maybe calculating statistics on, specific event types. That means finding certain patterns within certain fields of the lines describing those events, and then doing whatever is appropriate with the results: generating one overall report, storing certain data in various files, sending messages to indicate that an incident was detected, or whatever else is needed.
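As a rough sketch of that pattern, here is the "find patterns in fields, then count" idea written in Python. I'm assuming the lines follow the Common Log Format that many web servers use by default; your logs may be laid out differently. The names `LINE_PATTERN`, `summarize`, and `SAMPLE` are made up for this example, and the sample lines are invented, using addresses reserved for documentation.

```python
import re
from collections import Counter

# Assumed layout (Common Log Format):
# client ident user [timestamp] "request" status size
LINE_PATTERN = re.compile(
    r'^(?P<client>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<request>[^"]*)" (?P<status>\d{3}) (?P<size>\S+)'
)


def summarize(lines):
    """Count status codes, and count 4xx/5xx responses per client address."""
    statuses = Counter()
    error_clients = Counter()
    for line in lines:
        match = LINE_PATTERN.match(line)
        if match is None:
            continue  # skip lines that don't fit the assumed format
        status = match["status"]
        statuses[status] += 1
        if status[0] in "45":  # client errors (4xx) and server errors (5xx)
            error_clients[match["client"]] += 1
    return statuses, error_clients


# Invented sample lines, for illustration only.
SAMPLE = [
    '192.0.2.10 - - [10/Oct/2024:13:55:36 +0000] "GET /index.html HTTP/1.1" 200 2326',
    '192.0.2.10 - - [10/Oct/2024:13:55:40 +0000] "GET /missing.html HTTP/1.1" 404 153',
    '203.0.113.7 - - [10/Oct/2024:13:56:02 +0000] "POST /login HTTP/1.1" 500 0',
    '203.0.113.7 - - [10/Oct/2024:13:56:03 +0000] "POST /login HTTP/1.1" 500 0',
    'not a log line',
]

if __name__ == "__main__":
    statuses, error_clients = summarize(SAMPLE)
    for status, count in sorted(statuses.items()):
        print(status, count)
    for client, count in error_clients.most_common():
        print(client, count)
```

Because `summarize` accepts any iterable of lines, you could hand it an open file object instead of the sample list. On the command line, the same job is typically a pipeline of small tools that each handle one step.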

The Linux command-line environment includes several commands that were designed to carry out the individual steps. Therefore, Bash shell scripting seems to me to be the obvious place to start.

What Can I Use To Learn How To Program?

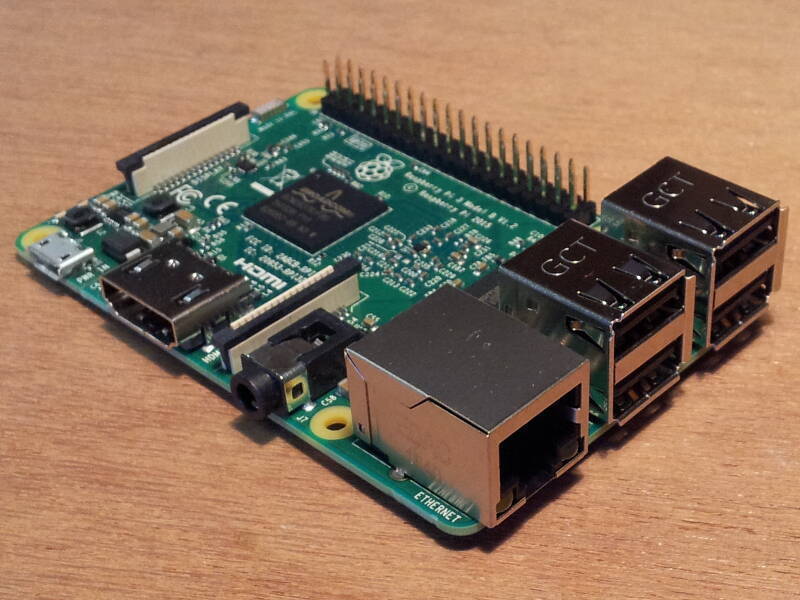

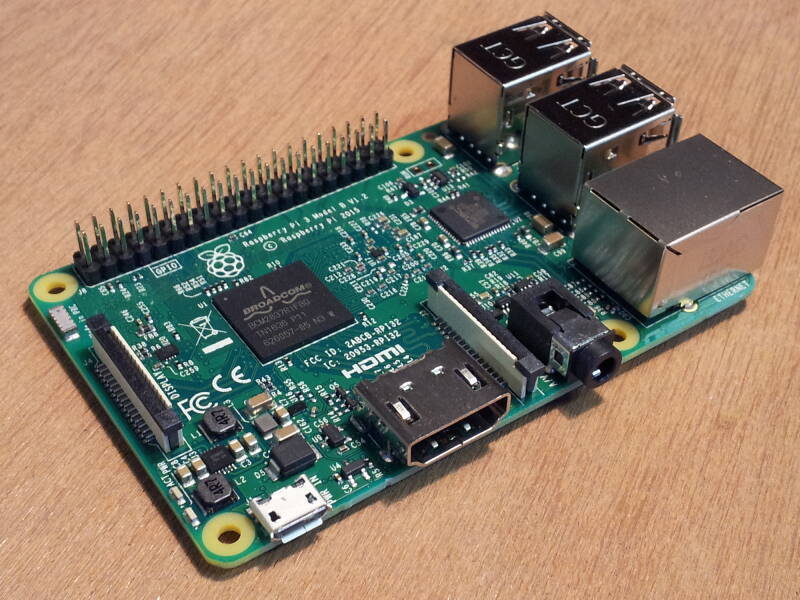

Consider a Raspberry Pi. It was designed to teach British school children how to program in Python. It runs Linux, so you have Bash. You can also use C/C++, C#, Java, SQL-based databases, Ruby, PHP, and on and on.

It doesn't cost very much, and is capable of a great deal for such a small package. The latest models include a quad-core processor, 8 GB of RAM, Gigabit Ethernet, USB 3, WiFi, and Bluetooth. It's about the size of two decks of cards stacked together, and you plug it into any HDMI display.

The project has been supported by the University of Cambridge Computer Laboratory and Broadcom. A co-founder of the project explained the need for it in 2012:

"[T]he lack of programmable hardware for children — the sort of hardware we used to have in the 1980s — is undermining the supply of eighteen-year-olds who know how to program, so that's a problem for universities, and then it's undermining the supply of 21 year olds who know how to program, and that's causing problems for industry."


A note on my earlier claim that there weren't computers back then: actually there were, because "computer" was a job title for a human who did math calculations with pencil, paper, and slide rule. The need for large ballistics tables and rapid cryptanalysis during World War II showed that computing machines were needed.